Researching Multimodal Intelligence for Open-World Visual Understanding

Welcome to my academic homepage. My work focuses on multimodal large language models, vision-language learning, and open-vocabulary visual understanding across diverse real-world scenarios such as remote sensing and underwater environments.

About Me

I am a Ph.D. student at the University of Science and Technology of China (USTC), supervised by Prof. Xuelong Li.

My research focuses on applying multimodal large language models and vision-language models to visual tasks across diverse scenes. I am particularly interested in open-vocabulary segmentation, multimodal reasoning, and domain-oriented visual intelligence.

Research Topics

I work at the intersection of multimodal learning, visual understanding, and domain-specific intelligence.

Multimodal LLMs

Vision-language models, multimodal reasoning, and foundation models for general-purpose visual intelligence.

Computer Vision

Open-vocabulary segmentation, semantic understanding, instance segmentation, and video understanding.

Domain Applications

Remote sensing vision, underwater vision, and robust multimodal perception in challenging environments.

News

- 2026.03: Four papers were accepted to CVPR 2026 (2 first-author, 1 second-author, and 1 fourth-author)! 🎉

- 2025.11: One paper was accepted to AAAI 2026 (Oral)! 🎉

- 2025.10: Awarded the National Scholarship for Graduate Students (研究生国家奖学金). 🎖️

- 2025.04: StitchFusion was accepted to ACM MM 2025 (Oral).

- 2024.09: Started my Ph.D. journey at USTC.

Research Highlights

The full publication list is available on Google Scholar.

We propose a novel framework that seamlessly integrates arbitrary visual modalities to improve multimodal semantic segmentation.

We develop an unbiased multiscale modal fusion framework for multimodal semantic segmentation.

We investigate efficient open-vocabulary segmentation approaches tailored to remote sensing imagery.

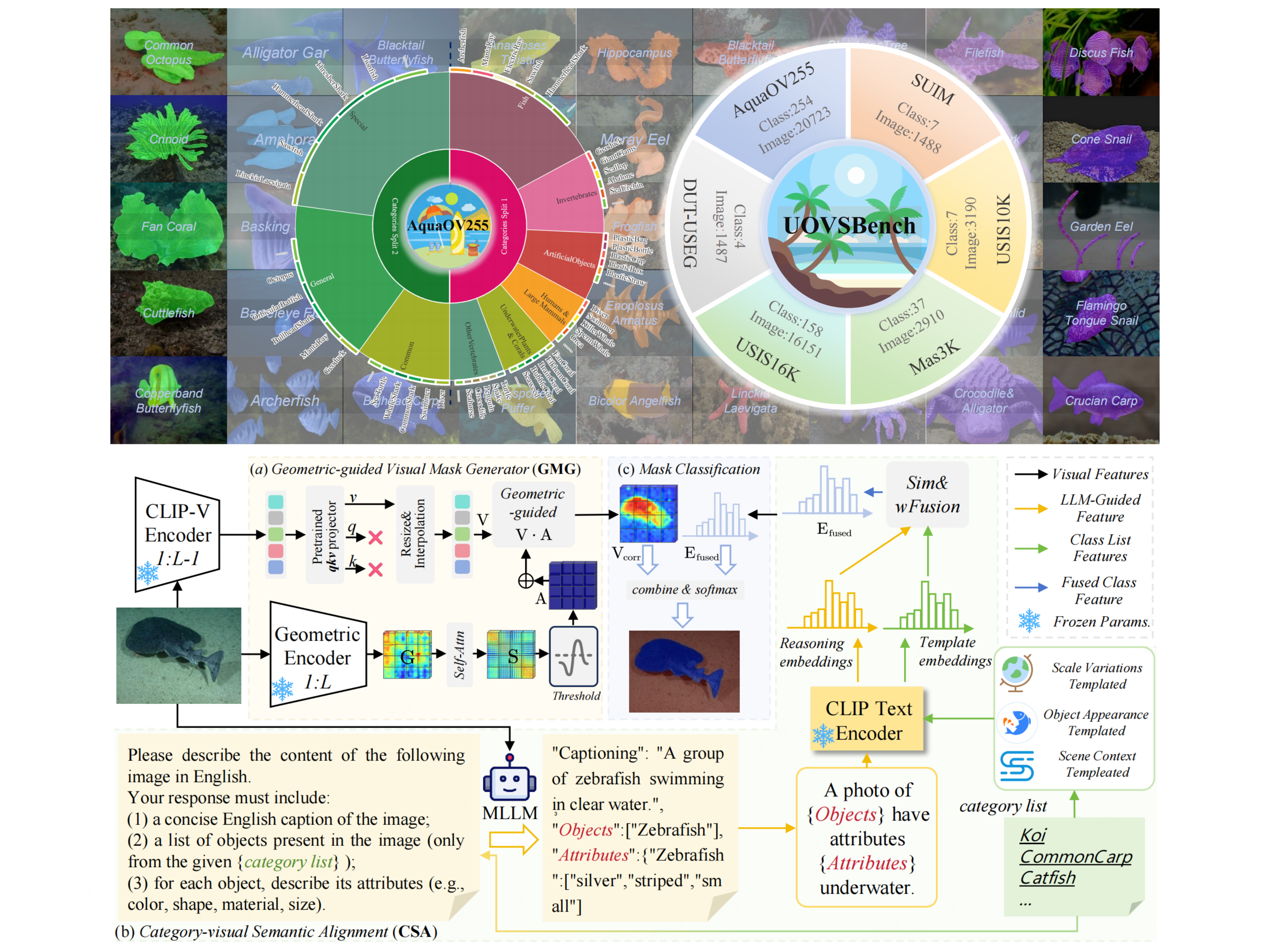

This work introduces MARIS, a benchmark and method for open-vocabulary instance segmentation in marine environments.

We explore training-free segmentation methods for underwater scenes, enabling effective transfer without additional supervision.

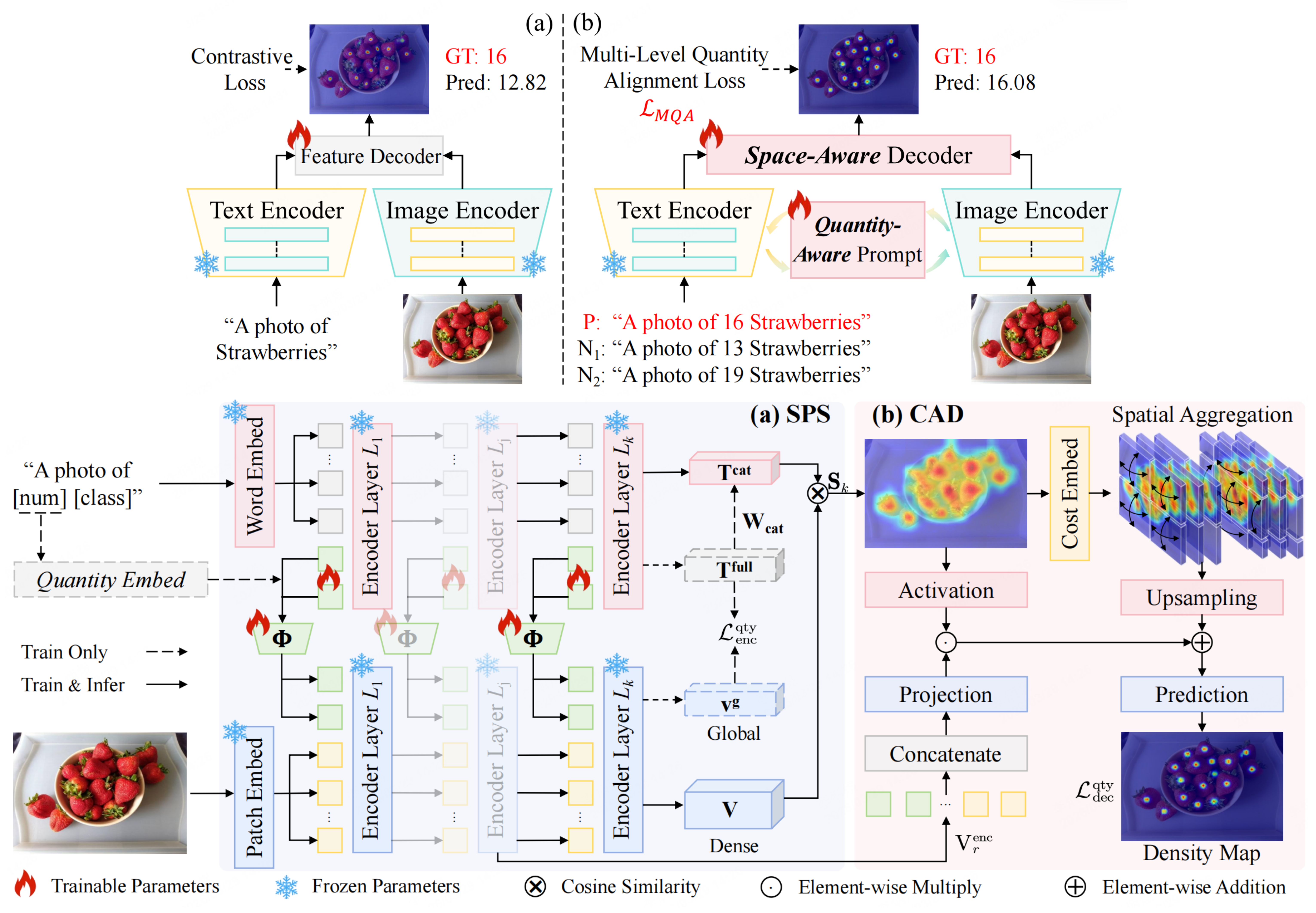

We enhance zero-shot object counting by improving both quantitative reasoning and spatial awareness.

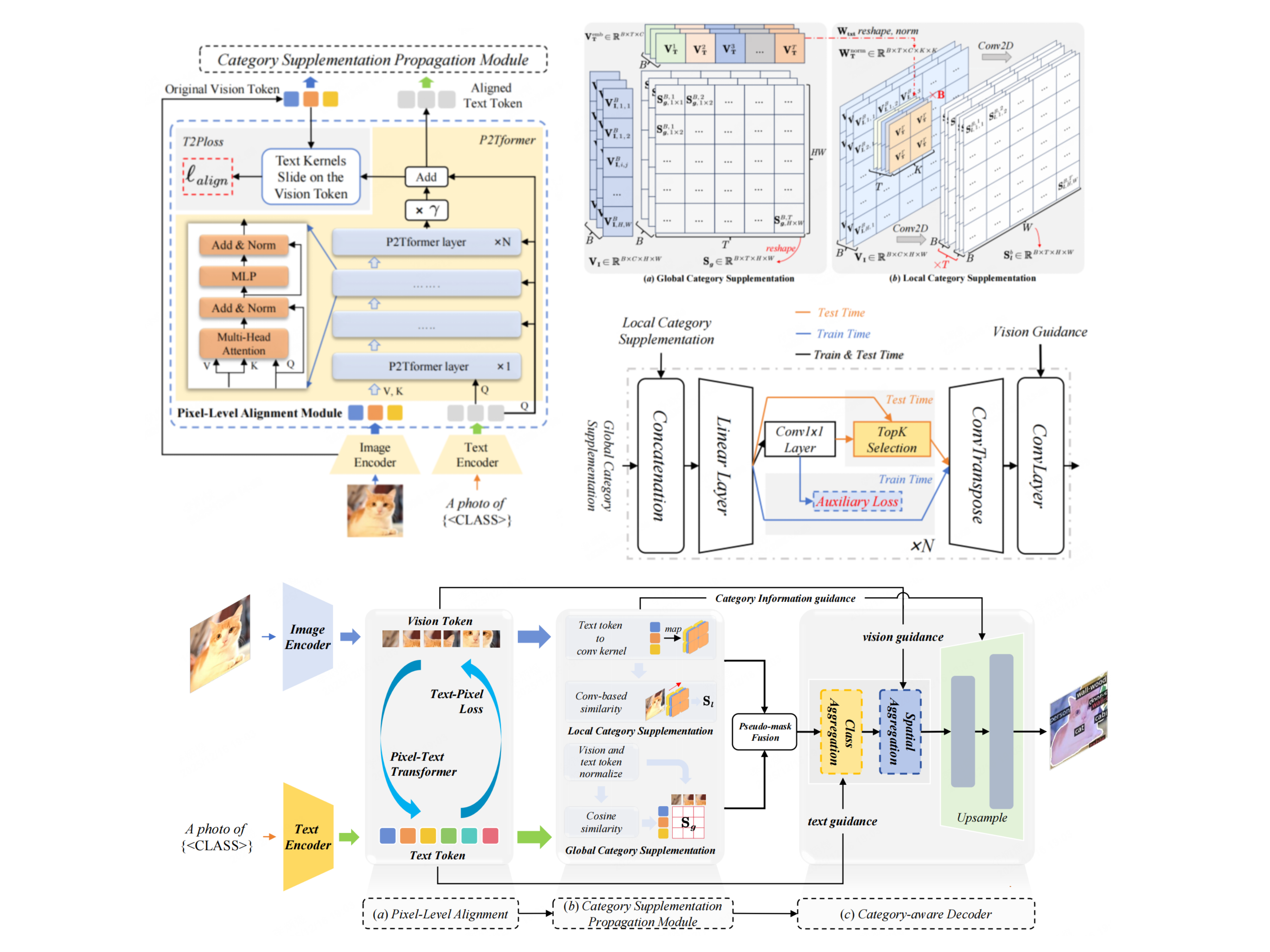

We propose a fine-grained pixel-text alignment framework for open-vocabulary semantic segmentation.

Honors and Awards

- 2025, National Scholarship for Graduate Students | 研究生国家奖学金

Academic Service

Reviewer — Journals

- TGRS

- Pattern Recognition (PR)

- More journals in related areas

Reviewer — Conferences

- CVPR

- NeurIPS

- ICLR

- Other major conferences